An original article by Decision Free Solutions

How to predict future behaviour of individuals as well as organisations


Note to the reader: This article is a chapter of the manuscript with the work title “Achieve aims with minimal resources by avoiding decision making — in Organisations, (Project) Management, Sales and Procurement (Everybody can manage risk, only few can minimise it)”. The article refers to other chapters, but can be read on its own. Other chapters are “On Decision Making”, “On experts and expert organisations”, “The four steps of DICE that will change the world” and “The five principles of TONNNO that will avoid decision making”.


This chapter can make your life both easier and happier

Experts can predict the future, close observers can predict future behaviour

If you are an expert in a certain situation, you know what is likely to happen next. When a toddler is sitting at the table, flinging arms, a tall glass at the edge of it, you can see it coming. We oversee the event’s conditions, and we are all experts when it comes to gravity. We are able to predict the future with some degree of certainty. The glass is likely to fall to pieces.

This chapter is not about predicting the future — something experts are good at — but about predicting future behaviour — something close observers can be good at. In this chapter it will be explained that:

- A whole range of behavioural characteristics are linked.

- These behaviours are distinctly different for those with a high level of perceptibility versus those with a low level of perceptibility.

- If we observe behavioural characteristics which are consistent with each other, we can predict a whole range of other characteristics too (with a certain degree of certainty).

The degree of certainty depends on the clarity and the consistency of the observed behaviour. They are very distinct for both ends of the spectrum. There is no mistaking an expert for someone who is very poor in perceiving information.

But also when the observed behaviour is not so clear and consistent, we still will have learned something. We will be able to predict, with a lesser or greater degree of certainty, whether some person or organisation is more or less likely to help us achieve our desired outcome. This is information we can — and must — make use of. And everybody is able to observe at least some behaviour. It is what we do all the time.

Do we really need to go there?

This chapter is both superfluous and essential. Observation, analysis, arguments, common sense. Those are the tools many of us are familiar with and subscribe to. You may read this because you want to learn about a step-by-step approach, a certain method, something you can directly apply. Why start talking about predicting someone’s or some organisation’s behaviour?

The approach of Decision Free Solutions is about making expertise matter. For experts to be able to utilise their expertise, the non-experts have to refrain from trying to control them. This may sound logical enough on paper, but in practice it is not at all an easy thing to do. The following conversation is all too familiar to me: “So you should simply trust the expert?” “Well, no, not simply trust. The expert has to explain what has to happen and why, and then you let the expert do it.” “And what if the expert then decides to do something else?” “An expert won’t do that.” “Why not?” “Er… because it is in the expert’s own interest to do a good job.” “But how can you be sure about this?”

This chapter will try to answer that last question.

Three reasons why this chapter is essential

This chapter is superfluous because the approach of Decision Free Solutions consists of four steps and five principles. When these are applied carefully and rigorously, the best available expert will be identified. This expert will have clarified how, and with what activities, the desired outcome will be achieved. This expert will also periodically report on the progress of the plan and on any deviations. In theory there is no need to observe anybody’s characteristics.

This chapter is essential, however, for three reasons. First, because “giving up control” and “trusting” the expert to properly execute the plan doesn’t come naturally to us. A method is only a method after all. Someone may be identified as an expert, but surely an expert has interests too. In these and many other instances we would like to have some confirmation, some reassurance even, that we are indeed dealing with an expert or an expert organisation. This chapter explains what ability all experts and expert organisations have in common, and how this ability shines through in many characteristics, many of which we can readily observe.

The second reason is fairly similar to the first. Rather than identifying “the expert” per se we identify “the best expert that is available to us”. If we can, by simple observations, get a sense of “how much of an expert” our expert is (to what extent expert-behaviour is displayed), then this information can be used to our advantage. Are we in good hands, or must we be vigilant and look for instances where, perhaps, our expert has made assumptions or simply fails to make matters transparent to us?

The third reason this chapter is essential is that on many occasions you may need an expert to minimise considerable risk, but there is no time or opportunity to positively identify one. The next best thing is then to confirm that someone or some organisation at least shows the characteristics in keeping with being an expert. Is this person likely to be a good manager, or a team player? Does this organisation know what it is doing, and is it likely to keep its promises? This chapter will explain what type of observations will provide helpful pointers.

Make that four

But there is more. Once you know what to look for, once your observations have led to predictions which were then confirmed, this chapter may have a still greater impact on your life than just giving you confidence someone will stick to the plan, or is indeed a good candidate for your project team. Also questions like “is this manager likely to listen to arguments”, “will this organisation embrace my creativity” and “what are the chances the weight of the office will change his behaviour” often can be answered with a fair degree of confidence.

This chapter may thus help you to align your expectations with your observations. This in turn may inform you on what best to do next, with whom to collaborate, whom to trust, whom to ignore, where to apply, when to commit, when to let go. This chapter may help you to make your life both easier and happier.

This chapter also will provide you with a tool that will help you to determine to what extent to trust your or somebody else’s “gut feelings” when having to make a choice. Finally, it suggests what to do next when you don’t like the observations you have made.


The behaviour of individuals and organisations can be forecasted

People’s behaviour is predictable. We have a fairly good idea how our friends, family and long-time colleagues will react in certain situations. Over time we have made our observations and gained experience, to the point of becoming pretty much experts in forecasting their reactions.

The behaviour of organisations is predictable in the same way too. This ability to forecast behaviour is very useful in the context of minimising risk. When you are in need of help it makes a world of difference if you can tell whether an individual or an organisation is indeed able to minimise risk for you.

Depending on both the number of observations made and the “extremity” of the behaviour observed, this forecast can also be very reliable. An organisation that has no need for frequent, drawn-out, and poorly prepared meetings with many people attending is likely to have both clear aims and the expertise to achieve them. Someone who has a disregard for facts and a long track record of abusive behaviour is unlikely to change his behaviour and go the extra mile to get it right for everybody.

The key to all this is someone’s or some organisation’s ability to perceive new information and their intrinsic “hunger” to gain a better understanding of cause and effect.


To Perceive or not to Perceive

That is the question

Experts and expert organisations are able to minimise risk in their field of expertise. To be able to do so requires the ability to perceive information. This again is achieved through the combination of perceptiveness and experience, as described in the previous chapter.

Experience is relatively easy to identify. Track records, CVs, number of projects, customers, years in operation and so on all communicate experience. Experience is important, but in the context of Risk Minimisation the degree of perceptiveness tends to be still more crucial. Risks tend to be more prevalent, and to have greater consequences, in information environments which are very dynamic and where experience is difficult to gain. Then it comes down to the ability to perceive changes and to grasp what the universal rules acting upon them imply for the desired outcome.

But how to identify, or measure, someone’s or an organisation’s degree of perceptiveness? How can we tell whether someone or some organisation is more or less likely to be able to minimise risks for us? To perceive, or not to perceive: that is the question we are to ask ourselves.

The nature of perceiving information

The information to be perceived consists of two components: event conditions and universal rules. What does it take to perceive these? What qualities are we talking about?

In relatively stable information environments someone’s experience tends to play an important role in knowing what conditions to look out for to achieve a desired outcome. When these conditions change rapidly experience may not suffice and the ability to perceive these changes becomes more important. Experience may still help in identifying dependencies and patterns of change. Intelligence (the ability to acquire, understand, and use knowledge) may help, too, in registering how event conditions are impacted.

But there are plenty of examples, in practically any field you care to think of, of very intelligent and observing people who have little to no interest in minimising risks for others. The combination of observational strengths and intelligence allows one to see dependencies, to anticipate developments, and many see them merely as opportunities to be exploited.

To become an expert — with the ability to minimise risk for others as opposed to exploit opportunities for oneself — a certain type of curiosity is required. A drive to understand, an innate interest in discovering what is cause and what is effect. It is actually the more human thing to do. Not even our closest relatives, the chimpanzees, believe in cause and effect.

To an expert an observed effect is not to be exploited but to be linked to a cause. An expert sets out to identify the universal rule that is impacting on the conditions. This rule is then to be tested, to be understood, to be perfected. Only if the expert fails is the observed effect simply to be accepted.

The point to be brought across is that the ability to minimise risk for someone else (as opposed to achieving aims for oneself), is linked to a profound interest in cause and effect. This results in an awareness of how so many things are related. Minimising risk for someone else is thus both intimately and logically linked to an understanding that helping someone else is also, simply, always, naturally, in the expert’s own interest. To an expert everything he or she does in minimising risk for someone else is contributing to a win-win situation.

The degree of perceptiveness can be observed

In this chapter it will be explained how easily observed characteristics may inform us of someone’s or some organisation’s degree of perceptiveness. The premise is simple: the ability to observe new information is essential in becoming an expert. But this ability is not an isolated quality. It is a central element in most, if not all, of a person’s or organisation’s characteristics.

To give a couple of simple examples, perceptiveness is also expressed in holding the door open for someone who is carrying two hot cups of tea, in disposing of someone else’s litter in a public park, in a company policy which takes employees’ responsibilities towards their families into consideration, and in an organisation’s very low turnover rate.


The Expert Identification (EXPID) model

The Expert Identification model (or “EXPID model”) is fully congruent with the Kashiwagi Solution Model (KSM) as originally devised from the Yin and Yang concept by Dean Kashiwagi [1]. KSM is an original and powerful concept, which is here presented as the EXPID model in light of a slightly different logic, a different classification scheme, and its focus on Risk Minimisation. The name of the model is also fairly self-explanatory. This model is used to identify (yes/no/maybe) an expert: someone’s or some organisation’s ability to minimise risk in achieving your desired outcome.

The KSM model — and by inheritance the EXPID model — assumes and states the following:

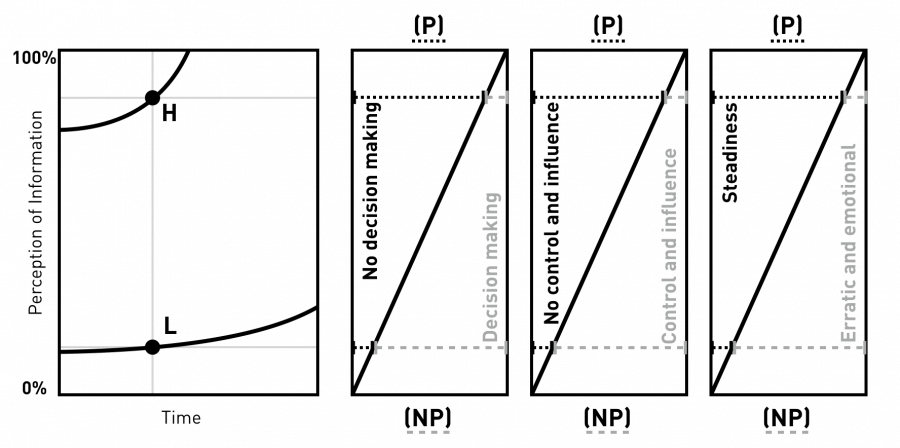

- The higher someone’s degree of perceptiveness, the faster the change rate in perceiving more and more information, the more information will be perceived (as indicated with the line with label ‘H’ in Figure 11, left).

- Vice versa, the lower someone’s degree of perceptiveness, the slower the change rate. Information will be perceived to a much lesser degree, and over time this improves only relatively slowly (as indicated with the line ‘L’ in Figure 11, left).

- A simple linear model can be used to link the degree of perceptiveness to how prevalent a certain characteristic is (see Figure 11, middle).

- The degree of perceptiveness of information is directly linked to the capacity to process, apply and use this information — and thus to minimise risk (see Figure 11, right).

- A range of characteristics of a person or of an organisation are all relative and related to the degree of perceptiveness/use of information.

- Based on a single and clearly observable characteristic, other characteristics which are linked to a similar level of perceptiveness can thus readily be assumed.

- The “power of prediction” (and thus the usefulness of the model in practice) is directly related to how extreme an observed characteristic is. For someone who consistently displays abusive behaviour other characteristics can be predicted with much more confidence than for someone who displayed mild abusive behaviour on only one or two occasions.

Figure 11. The Expert Identification (EXPID) model linking the extremes of a characteristic to perceiving a lot (P) or only little information (NP) at a given point in time. The ability to perceive information is directly linked with the ability to minimise risk. Someone who has a high (H) degree of perceptiveness will have a higher change rate (increasing the amount of information perceived over time) than someone who has a low (L) degree of perceptiveness.

In summary, the EXPID model equates the ability to perceive information to the ability to minimise risk. Based on the observation of characteristics the model allows one to predict whether someone or some organisation is more or less likely to be able to minimise risk.
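As a rough illustration only, the linear link and the “power of prediction” described above can be sketched in a few lines of Python. The 0-to-1 scales, the `Observation` fields and the way confidence is computed are assumptions made for this sketch; they are not part of the EXPID or KSM model as published.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """One observed characteristic (all fields are illustrative assumptions)."""
    characteristic: str
    expert_like: bool   # True if consistent with minimising risk
    extremity: float    # 0.0 (mild, seen once) .. 1.0 (extreme, seen consistently)


def prevalence(perceptiveness: float, expert_like: bool) -> float:
    """Linear link between perceptiveness (0..1) and how prevalent a trait is."""
    p = min(max(perceptiveness, 0.0), 1.0)
    return p if expert_like else 1.0 - p


def estimate(observations: list[Observation]) -> tuple[float, float]:
    """Return (perceptiveness estimate, confidence), both in 0..1."""
    weight = sum(o.extremity for o in observations)
    if weight == 0:
        return 0.5, 0.0  # nothing usable observed: no basis for a prediction
    # Extremity-weighted share of observations that point to an expert.
    score = sum(o.extremity for o in observations if o.expert_like) / weight
    # Confidence is high only when observations agree and are extreme.
    agreement = abs(2.0 * score - 1.0)
    confidence = agreement * (weight / len(observations))
    return score, confidence


# Consistently abusive behaviour versus mild behaviour seen once.
consistent = [Observation("abusive behaviour", False, 0.9) for _ in range(3)]
mild = [Observation("abusive behaviour", False, 0.2)]

score_c, conf_c = estimate(consistent)
score_m, conf_m = estimate(mild)
print(score_c, round(conf_c, 2))
print(score_m, round(conf_m, 2))
print(round(prevalence(score_c, expert_like=True), 2))
```

Both sets of observations point the same way, but the consistent and extreme behaviour yields a much higher confidence than the single mild observation. This sketch deliberately ignores the number of observations beyond averaging their extremity; a fuller version would let confidence grow as more observations accumulate.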

In the EXPID model, based on the Event model and logic, three distinct “container” categories are identified. Each observed characteristic can be put into at least one of the three categories.


The three categories of the EXPID model

Using the Event model to predict behaviour

To minimise risk you must be able to look into the future. A desired outcome can be achieved with minimal use of resources if you can perceive the information pertaining to an event. In the absence of sufficient perceptiveness and experience, especially in dynamic situations (including the dynamics that occur when people work together), the event’s outcome will remain unknown. This lack of expertise and the inability to predict outcomes result in a range of linked characteristics, for organisations and individuals alike.

Taking the Event model as a starting point, three container categories are defined, each indicating opposite characteristics in the form of “consistent with minimising risk” versus “inconsistent with minimising risk”. The categories are (see also Figure 12):

- “No decision making” versus “Decision making”

- “No control and influence” versus “Control and influence”

- “Steadiness” versus “Erratic and emotional”

Figure 12. The three container categories of the EXPID model. Each characteristic of an organisation or individual can be attributed to one or several of the categories. The more characteristics are observed, and the more ‘extreme’ these characteristics are, the more reliable the prediction of related characteristics becomes.

The categories, and the corresponding characteristics, are described in the sections below. For each category several examples are listed. These lists are far from complete, but also long enough to get the gist of it.
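One way to picture the idea that each observed characteristic falls into one or several categories is a simple tally. The sketch below is hypothetical: the trait labels are a small subset paraphrased from the lists in this chapter, and the names `Category`, `TRAIT_CATEGORIES` and `tally` are invented for illustration.

```python
from collections import Counter
from enum import Enum


class Category(Enum):
    """The 'inconsistent with minimising risk' side of each container category."""
    DECISION_MAKING = "Decision making"
    CONTROL_AND_INFLUENCE = "Control and influence"
    ERRATIC_AND_EMOTIONAL = "Erratic and emotional"


# Illustrative subset only; a trait may belong to more than one category.
TRAIT_CATEGORIES = {
    "many meetings": {Category.DECISION_MAKING},
    "poorly prepared meetings": {Category.DECISION_MAKING},
    "many management layers": {
        Category.DECISION_MAKING,
        Category.CONTROL_AND_INFLUENCE,
    },
    "reliance on rules and protocols": {Category.CONTROL_AND_INFLUENCE},
    "great value attached to contracts": {Category.CONTROL_AND_INFLUENCE},
}


def tally(observed: list[str]) -> Counter:
    """Count how many observed traits fall into each category."""
    counts = Counter()
    for trait in observed:
        # Traits not in the mapping are ignored rather than guessed at.
        for category in TRAIT_CATEGORIES.get(trait, ()):
            counts[category] += 1
    return counts


counts = tally([
    "many meetings",
    "many management layers",
    "great value attached to contracts",
])
for category, n in counts.most_common():
    print(f"{category.value}: {n}")
```

Because “many management layers” counts towards two categories, three observations produce four tally marks here. A tally like this only organises observations; how much weight each one deserves still depends on how extreme and consistent the behaviour is.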

No decision making versus Decision making

Decisions are unsubstantiated choices which are made by those to whom a situation is not transparent. Decisions are made when insufficient information is perceived (lack of perceptiveness, lack of experience) or when the desired outcome is not defined in a transparent and unambiguous way.

Organisations or individuals who do not perceive sufficient information and/or have no transparent aims tend to:

- Be unable to substantiate the choices they make (the definition of decision making)

- See their accomplishments as something special (to the point of expressing self-admiration)

- Believe they are unbiased and in the possession of plenty of “gut instinct” (in absence of alternatives)

- Change positions easily and don’t mind contradicting themselves (previous positions held were not tied to any analysis or logic)

- Demonstrate little to no self-reflection (there is no basis for self-reflection)

- Obey strict hierarchy (so that it is clear who is entitled to make decisions)

- Have long response times (as hierarchy is to be strictly obeyed)

- Have many meetings (do not come to a conclusion easily)

- Have many management layers (drawn-out chain of decision making with limited accountability)

- Have meetings with many people (unclear where the expertise is, hierarchy important, several layers represented)

- Prepare poorly for meetings (purpose often unclear, not required for decision making)

- Have many managers instructing others what to do and how to do it (no other way of knowing what to do, unsure about available expertise)

- Be inefficient (don’t have good idea of how and where to use what resources)

- Have extensive detailed internal communications in form of emails, reports, documents etc. (constant need to clarify, to search, to confirm)

- Spend large resources on marketing and public relations (to overcome lack of expertise, in absence of transparent aim)

- Lack clarity in message and focus in communicating with outside world which generally is a combination of marketing statements and (technical) details (in absence of transparent aim and demonstrable expertise)

- Be unable to measure their performance (no transparent aims)

- Be surprised and quick to use excuses when missing targets (for not perceiving sufficient information)

- Rely heavily on inspection (unclear about presence and utilisation of expertise)

- Focus on internal rather than external risks (lack expertise, perceive little information)

- Work towards achieving minimum standards (lack expertise to work towards high performance)

- React to and follow the market (unable to perceive developments)

- Focus on tactics rather than strategy (idem)

- Never become expert organisations (change requires the perception of information)

In Figure 13 examples of EXPID model characteristics for the no decision making/decision making category are shown.

Figure 13. Examples of opposite characteristics linked to either ‘no decision making’ or ‘decision making’.

The APTC project used the method of Best Value Procurement to procure equipment and servicing for an estimated sum of over 75 MEUR (more on this in the chapter “Decision Free Procurement”). The vendors participating in the tender were strongly advised to involve someone with experience in this method of procurement. At the dialogue session with the vendors it turned out that “Vendor C” was the only vendor not to have followed up on this advice. Six weeks before they were to hand in their tender documents they had little to no understanding of what was expected of them. The purpose of the dialogue session, an opportunity for them to ask us questions to better understand (the context of) our desired outcome, was also lost on them. Instead they presented the technical ability of their solution, ignoring our requests to clarify how the described functionality supported achieving our desired outcome. The tender documents showed that they were not able to change course in the six weeks that followed.

In the evaluation meeting following the announcement of the ranking of the vendors it was explained to them why they had scored so poorly and ranked last. The employees of Vendor C who had written the tender documents accepted the evaluation. They had realised too late what was expected of them, they had had to deal with multiple tenders and deadlines at the same time, and they had had to contend with superiors telling them what to put in the documents, all of which contributed to a poor performance. This did not stop Vendor C’s higher management from instituting interim injunction proceedings against APTC only days later. These proceedings were instituted because higher management was unwilling to accept the fact that not only Vendor B but also Vendor A had outranked them. They demanded a re-evaluation, which later turned into a request to redo the tender using a traditional tender method. As their lawyers also failed to grasp what APTC had asked for in the tender, their demands were all denied in court.

When, several months later, I spoke with one of Vendor C’s executives and asked him what they had learned from the experience, he replied that their evaluation showed that they had made the wrong person responsible, and that they had now dealt with that. Vendor C is a renowned company and a market leader in several fields involving complex technology, employing hundreds if not thousands of expert engineers and physicists. Vendor C also displays many characteristics of a culture of decision making. The ability to make advanced and technologically complex products and solutions is not to be equated with the ability to minimise risk in achieving a client’s formulated desired outcome. This is something the Apollo Program also learned, at the cost of the lives of three astronauts (more about this in the chapter “Decision Free Procurement”).


No control and influence versus Control and influence

The Event model states that any event has only one outcome, and that this outcome is determined by the event conditions and universal rules. Following this logic there is no “controlling” or “influencing” an event towards another outcome. There is no controlling or influencing somebody’s skills and characteristics, his/her ability to perform a certain task, or his/her intrinsic motivation.

Organisations and individuals who do not oversee event conditions, who have a poor understanding of the workings of relevant universal rules, and who (thus) uphold a belief in “controlling and influencing” their way to achieve a certain outcome, tend to:

- Rely on rules and protocols (telling employees within what confines how to do their work)

- Have many staff functions (producing rules, guidelines and protocols)

- Have many management layers (to have closer control over employees and teams)

- Rely on relationships, trust and loyalty (as ways to control and influence)

- Attach great value to contracts (as a tool to control and influence performances)

- Spend many resources on legal support (as a form of security, to ‘enforce’ what is believed to be the right outcome of an event)

- Use extensive and long-term incentive programs (to control and influence motivation)

- Value status, prestige, authority and seniority (as tools of control and influence)

- Work long hours (as part of a “controlled” environment)

- Have little interest in work-life balance (feeling of importance at work, worried to lose control)

- Greatly overestimate the importance of the individual in organisational performances

- Greatly underestimate the importance of the organisation’s structure and culture

- Readily apportion blame and praise to individuals

- Readily criticise others for lacking motivation or having secret agendas

- Believe in the possibility of different outcomes in identical situations

- Fail to learn lessons/new universal rules (focus is on the individual, not the environment)

In Figure 14 examples of EXPID model characteristics for this category are shown.

Figure 14. Examples of opposite characteristics linked to either ‘no control and influence’ or ‘control and influence’.

The concept of “no control and influence” as used here concerns someone’s expertise, ability, or intrinsic motivation. That people’s behaviour can be controlled and influenced goes without saying. An interesting example of changing people’s behaviour comes from T. Sharot’s “The influential mind” [18]. When, on an intensive care unit in New York, cameras were mounted over soap dispensers to get medical personnel to wash their hands more consistently, this had no effect. Only one in ten followed the guidelines, even knowing they were being filmed. When electronic displays were put up instead, with up-to-date information on the percentage of personnel who had washed their hands, the compliance rate increased to nine out of ten. In this example employees did not alter their behaviour when they were merely “controlled” (i.e. confronted with someone’s decision to mount cameras, a measure that lacked substantiation as to how it would contribute to a stated goal). But when they received instant, relevant, and transparent (easy to understand) information reflecting the performance of everybody on the unit, including their own, behaviour changed dramatically. This is an example of how “control” does little to nothing to improve performance, whereas providing transparent and relevant performance information (avoiding any need for decision making as to the why or how or for whom) can result in spectacular organisational improvements.


Read the rest of the article in this PDF. Remaining sections are:

- Steadiness versus Erratic and emotional

- A guide to trusting feelings

- Who is to make the decision?

- “I can’t explain it, but this is what I propose”

- “It is up to you; what do you prefer?”

- “It just feels right”

- The Choice Making diagram